Maximizing revenue in online advertising

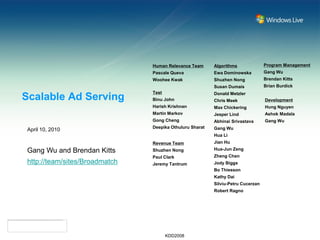

- 1. Gang Wu and Brendan Kitts, April 10, 2010. KDD2008
  - Teams: Human Relevance Team, Algorithms, Program Management, Test, Scalable Ad Serving, Development, Revenue Team.
  - Team members: Pascale Queva, Ewa Dominowska, Gang Wu, Woohee Kwak, Shuzhen Nong, Brendan Kitts, Susan Dumais, Brian Burdick, Donald Metzler, Binu John, Chris Meek, Harish Krishnan, Max Chickering, Hung Nguyen, Martin Markov, Jesper Lind, Ashok Madala, Gong Cheng, Abhinai Srivastava, Deepika Othuluru, Sharat, Hua Li, Jian Hu, Hua-Jun Zeng, Paul Clark, Zheng Chen, Jeremy Tantrum, Jody Biggs, Bo Thiesson, Kathy Dai, Silviu-Petru Cucerzan, Robert Ragno.
  - http://team/sites/Broadmatch

- 2. The Ad Serving Problem (Banner Advertising): Trigger = pageview from a user; response = an ad.

- 3. The Ad Serving Problem (Paid Search Advertising): Trigger = pageview from a user; response = an ad.

- 4. The Ad Serving Problem: Technical Challenge to Do This at Scale!
  - Problem: given any trigger, respond with an ad that maximizes revenue.
  - Scale: for a simple Bayesian or codebook method, scale = triggers × ads, so 5 million × 9 million = 45 trillion possible pairs to evaluate for suitability.
  - Speed: ad serving should be completed in around 50 milliseconds, and we can't store 45 trillion pairs in memory.
  - Ad serving algorithm: maintain a codebook of triggers and the ads that should be presented, using a hash table for rapid serving, and distribute the hash table across machines.
  - Data mining problem: come up with a good codebook that makes the best use of the precious memory resource.
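
The serving side described above, a precomputed codebook kept in a hash table and distributed across machines, can be sketched as follows. This is a minimal illustration, not the production design: the class name `ShardedCodebook`, the shard count, and the use of an MD5 digest to route a trigger to a shard are all assumptions, and each "machine" is simulated by an in-memory dict.

```python
import hashlib


class ShardedCodebook:
    """Hypothetical trigger -> ads codebook split across hash shards.

    Each shard stands in for one serving machine; here it is just a dict.
    """

    def __init__(self, num_shards: int):
        self.num_shards = num_shards
        self.shards = [{} for _ in range(num_shards)]

    def _shard_for(self, trigger: str) -> int:
        # A stable digest (unlike Python's per-process salted hash()) so every
        # front end routes the same trigger to the same shard.
        digest = hashlib.md5(trigger.encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big") % self.num_shards

    def put(self, trigger: str, ad_id: str) -> None:
        # Offline step: store a (trigger, ad) pair chosen for the codebook.
        shard = self.shards[self._shard_for(trigger)]
        shard.setdefault(trigger, []).append(ad_id)

    def lookup(self, trigger: str) -> list:
        # Online step: one dict lookup on one shard, no scoring at serve time.
        return self.shards[self._shard_for(trigger)].get(trigger, [])


book = ShardedCodebook(num_shards=4)
book.put("shoes", "ad-nike-sneakers")
print(book.lookup("shoes"))  # ['ad-nike-sneakers']
print(book.lookup("boats"))  # []
```

In this sketch all scoring happens when the codebook is built, so the per-request cost is a single hash lookup; the hard part moves to deciding which pairs deserve the limited memory.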

- 5. The Ad Serving Problem: Definition
  - Given any trigger, respond with an ad that maximizes revenue subject to some constraints.
  - Constraints include:
    - Relevance: CTR > x
    - Storage limit: number of codebook pairs < N
    - And many more: frequency capping, sequence constraints, competitive exclusion, mainline reserve constraints
  - Let's have a look at revenue (revenue vs. relevance).
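
Most of these constraints can be enforced when the codebook is built, but frequency capping is naturally a serving-time check. Below is a minimal sketch that assumes a per-user, per-ad impression cap with no time window. Real caps may be windowed, and that is omitted here. The names `FrequencyCapper`, `max_impressions`, and `serve` are hypothetical, and the candidate list is assumed to be ordered best-first.

```python
from collections import Counter


class FrequencyCapper:
    """Hypothetical frequency cap: show a given ad to a given user at most
    `max_impressions` times (no time window in this sketch)."""

    def __init__(self, max_impressions: int):
        self.max_impressions = max_impressions
        self.counts = Counter()  # (user_id, ad_id) -> impressions so far

    def allow(self, user_id: str, ad_id: str) -> bool:
        return self.counts[(user_id, ad_id)] < self.max_impressions

    def record(self, user_id: str, ad_id: str) -> None:
        self.counts[(user_id, ad_id)] += 1


def serve(candidates, user_id, capper):
    """Return the first candidate (assumed sorted best-first) under its cap."""
    for ad_id in candidates:
        if capper.allow(user_id, ad_id):
            capper.record(user_id, ad_id)
            return ad_id
    return None  # every candidate is capped for this user


capper = FrequencyCapper(max_impressions=2)
shown = [serve(["ad-a", "ad-b"], "user-1", capper) for _ in range(5)]
print(shown)  # ['ad-a', 'ad-a', 'ad-b', 'ad-b', None]
```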

- 6. Revenue in the Ad Business
  - $\text{Revenue} = \sum_{k,t} I_{k,t}\, r_{k,t}\, c_{k,t}$
  - $I_{k,t} \in \{0, 1\}$: should we serve ad $k$ for trigger $t$?
  - $r_{k,t}$: revenue per action
  - $c_{k,t}$: probability of action
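
Summed over every trigger-ad pair, each pair contributes its payout times its probability of action, but only if it is served. Below is a minimal sketch of that sum. It assumes the three quantities are stored in dicts keyed by hypothetical (ad, trigger) tuples, and the numbers are made up for illustration.

```python
def total_revenue(served, revenue_per_action, prob_action):
    """Revenue = sum over (k, t) of I[k,t] * r[k,t] * c[k,t].

    Each argument is a dict keyed by (ad k, trigger t):
      served             -> I[k,t], 1 if the pair is served, else 0
      revenue_per_action -> r[k,t], payout per action (e.g. per click)
      prob_action        -> c[k,t], probability of the action (e.g. CTR)
    """
    return sum(
        served[pair] * revenue_per_action[pair] * prob_action[pair]
        for pair in served
    )


# Illustrative numbers only.
I = {("nike sneakers", "shoes"): 1, ("boat rental", "shoes"): 0}
r = {("nike sneakers", "shoes"): 0.50, ("boat rental", "shoes"): 2.00}
c = {("nike sneakers", "shoes"): 0.04, ("boat rental", "shoes"): 0.01}
print(total_revenue(I, r, c))  # 0.02: only the served pair contributes
```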

- 7. Probability of Action (CTR): estimating $c_{k,t}$ in $\text{Revenue} = \sum_{k,t} I_{k,t}\, r_{k,t}\, c_{k,t}$ (Ad Serving 101)
  - Global CTR $= \Pr(k)$: CTR of the advertisement without conditioning, i.e., the popularity of the advertisement.
  - Conditional CTR $= \Pr(k \mid t)$: CTR of the advertisement conditional on the trigger, i.e., its basic historical performance.
  - Smoothed CTR: varies smoothly between the two.
  - Feature-based model: decision tree, linear regression, etc. The disadvantage is that this requires some knowledge of the ads.
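
The slide does not say how the smoothed estimate blends the two. One common choice, used here as an assumption and not as the authors' method, is to shrink the conditional CTR toward the global CTR with a pseudo-count `strength`. With few impressions of the (k, t) pair, the estimate stays near Pr(k). With many, it approaches Pr(k|t). The function names and the default `strength=100.0` are hypothetical.

```python
def global_ctr(clicks_k: int, impressions_k: int) -> float:
    """Pr(k): CTR of ad k over all triggers."""
    return clicks_k / impressions_k if impressions_k else 0.0


def conditional_ctr(clicks_kt: int, impressions_kt: int) -> float:
    """Pr(k|t): CTR of ad k when shown for trigger t."""
    return clicks_kt / impressions_kt if impressions_kt else 0.0


def smoothed_ctr(clicks_kt: int, impressions_kt: int,
                 prior_ctr: float, strength: float = 100.0) -> float:
    """Shrink Pr(k|t) toward prior_ctr, treating the prior as worth
    `strength` extra impressions (hypothetical default)."""
    return (clicks_kt + strength * prior_ctr) / (impressions_kt + strength)


prior = global_ctr(200, 10_000)                    # 0.02
print(conditional_ctr(1, 2))                       # 0.5: noisy, only 2 impressions
print(round(smoothed_ctr(1, 2, prior), 4))         # 0.0294: pulled toward 0.02
print(round(smoothed_ctr(500, 10_000, prior), 4))  # 0.0497: close to Pr(k|t) = 0.05
```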

- 8. CTR Prediction Accuracy
  - [Figure: CTR prediction accuracy of globalctr, history, smoothed, linearreg, and dtree, with a callout for the feature-based methods; both axes run from 0 to 1.]
  - One could go model-less, at least for the top 15% of data as measured by conditional probabilities, and generate fairly good results.
  - Conditional CTR does well but peters out because we lack data.
  - Global CTR does surprisingly well… Ad servers purportedly use this technique.

- 9. Revenue in the Ad Business (repeat of slide 6)

- 10. Ad Serving: Solution
  - $\text{Revenue} = \sum_{k,t} I_{k,t}\, r_{k,t}\, c_{k,t}$
  - Greedy optimization:
    - Add to the codebook (set $I_{k,t} = 1$ for) the pairs with the highest expected revenue $r_{k,t}\, c_{k,t}$, meaning probability of action × payout for action. A served pair contributes $d_{k,t} = I_{k,t}\, r_{k,t}\, c_{k,t}$.
    - Keep adding while the constraints are met.
  - [Figure: greedy allocation of trigger-ad pairs to the ad server. y-axis: $d_{k,t}$ on a log scale, $10^{-7}$ to $10^{-1}$; x-axis: number of trigger-ads being served, 0 to $3 \times 10^5$. Annotations: sort by predicted CTR; pick the highest E[Revenue] up to the capacity constraint.]
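
The greedy step can be sketched as follows. First, drop the pairs that fail the relevance constraint. Then sort the rest by expected revenue r × c and keep the top N. This is a minimal sketch that assumes only the two constraints from slide 5 (CTR > x and a storage limit on codebook pairs). The tuple layout and the name `greedy_codebook` are hypothetical, and the candidate numbers are made up.

```python
def greedy_codebook(candidates, capacity, min_ctr):
    """Greedy codebook construction under two constraints (sketch).

    candidates: list of (trigger, ad, r, c) tuples, where r is revenue per
                action and c is the predicted probability of action (CTR).
    capacity:   storage limit, the maximum number of codebook pairs.
    min_ctr:    relevance constraint, keep only pairs with c > min_ctr.
    Returns the (trigger, ad) pairs given I[k,t] = 1, best first.
    """
    eligible = [cand for cand in candidates if cand[3] > min_ctr]
    # Expected revenue if the pair is served: r * c.
    eligible.sort(key=lambda cand: cand[2] * cand[3], reverse=True)
    return [(trigger, ad) for trigger, ad, _, _ in eligible[:capacity]]


pairs = [
    ("shoes", "nike sneakers", 0.50, 0.040),  # E[rev] = 0.020
    ("shoes", "boat rental", 2.00, 0.001),    # fails the CTR threshold
    ("boots", "hiking boots", 0.80, 0.030),   # E[rev] = 0.024
    ("boots", "rain boots", 0.30, 0.050),     # E[rev] = 0.015
]
print(greedy_codebook(pairs, capacity=2, min_ctr=0.005))
# [('boots', 'hiking boots'), ('shoes', 'nike sneakers')]
```

Suppose the only constraints are a per-pair filter and a cap on the number of pairs. Then taking the top N by expected revenue is exactly optimal, because the objective is a sum of non-negative per-pair terms. Constraints that couple pairs, such as competitive exclusion or frequency capping, make the greedy choice a heuristic.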

- 11. Some curious things about maximizing revenue…

- 12. Some curious things about maximizing revenue…
  - [Figure: CTR of ad (plotted as "global CTR of expansion", 0.06 to 0.26) versus revenue per display (0 to 0.14); each knot is a decile of the trigger-ad population.]
  - Property noted by Jensen and other authors: a tendency for relevance to be correlated with revenue. Advertisers have to be highly relevant to offer to pay such high prices, since otherwise they pay for lots of non-converting clicks.
  - Hey, what happened!?!? Might advertisers with poor CTRs be trying to make up for it by increasing their bid price?
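
A curve like this one, in which each knot summarizes a decile of the trigger-ad population, could be produced as follows. The slides do not say which variable the deciles are taken over. This sketch assumes deciles of revenue per display, and the name `decile_knots`, the input format, and the example data are hypothetical.

```python
def decile_knots(pairs, n_bins=10):
    """Summarize trigger-ad pairs as one knot per quantile bin.

    pairs: list of (revenue_per_display, ctr) tuples, one per trigger-ad pair.
    Sorts by revenue per display, splits into n_bins groups of (nearly)
    equal size, and returns (mean revenue per display, mean CTR) per group.
    """
    ordered = sorted(pairs)
    knots = []
    for b in range(n_bins):
        start = b * len(ordered) // n_bins
        end = (b + 1) * len(ordered) // n_bins
        group = ordered[start:end]
        if not group:  # fewer pairs than bins
            continue
        mean_rev = sum(rev for rev, _ in group) / len(group)
        mean_ctr = sum(ctr for _, ctr in group) / len(group)
        knots.append((mean_rev, mean_ctr))
    return knots


# Made-up data: 20 pairs with increasing revenue per display, constant CTR.
pairs = [(i / 100, 0.10) for i in range(20)]
knots = decile_knots(pairs)
print(len(knots))  # 10
```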

- 13. Ad Serving Application
  - Use a lookup table to map to a keyword-tagged advertisement. When a user types in “shoes”, map it to the keyword-tagged advertisement “nike sneakers” (for example).
  - The keyword tags and ad creatives are entered by the advertiser.
  - We can choose whether to add a codebook entry or leave it out.
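
The lookup-table idea above can be sketched as two dictionaries. The first is a codebook, chosen offline, that maps a user query to an advertiser's keyword tag. The second holds the advertiser-entered creative for each keyword. Everything here is hypothetical illustration: the table contents, the creative text, the lowercase normalization, and the function name `match_ad`.

```python
# Advertiser-entered keyword tags and their ad creatives (made-up example).
creatives = {
    "nike sneakers": "Nike Sneakers: new styles in stock",
}

# Codebook built offline: normalized user query -> advertiser keyword tag.
# A query with no entry is one we chose to leave out.
codebook = {
    "nike sneakers": "nike sneakers",  # exact match
    "shoes": "nike sneakers",          # broader query mapped to the same tag
}


def match_ad(query: str):
    """Return the creative to show for a query, or None if no entry exists."""
    keyword = codebook.get(query.strip().lower())
    if keyword is None:
        return None
    return creatives[keyword]


print(match_ad("Shoes"))  # Nike Sneakers: new styles in stock
print(match_ad("boats"))  # None
```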

- 14. Building it will be a piece of cake… Not really!
  - 1 year to launch.
  - 55 algorithms tested from 10 teams! It turned into a competition.
  - Unexpected challenges, including porn, trademarks, bad expansions, editorial policy, and adoption and acceptance by internal teams.

- 15. Results
  - Implemented on paid advertisements in the Live.com search engine.
  - Data for 4 months are analyzed in this paper, although the system has been running for the past two years.
  - 3 billion impressions.
  - Experimental test setup:
    - Test split randomly on Live Search traffic
    - Control = basic ad serving algorithm
    - Experimental = optimized ad serving algorithm
  - Positive on all metrics, including advertiser value, searcher value, and adCenter performance, but achieving this required some work.
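
The slides say only that the test was split randomly on Live Search traffic. One common way to implement such a split, assumed here and not taken from the paper, is to hash a stable identifier. Each user then lands in the same arm on every request, while assignment across users behaves like a random draw. Splitting by user rather than by query, the 50% share, and the function name `assign_group` are all assumptions.

```python
import hashlib


def assign_group(user_id: str, experiment: str, treatment_share: float = 0.5) -> str:
    """Hypothetical hash-based split into "experimental" and "control".

    The same (experiment, user) pair always maps to the same bucket in [0, 1).
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    # 6 bytes = 48 bits, exactly representable as a float, so bucket < 1.0.
    bucket = int.from_bytes(digest[:6], "big") / 2**48
    return "experimental" if bucket < treatment_share else "control"


groups = [assign_group(f"user-{i}", "adserving-test") for i in range(10_000)]
print(groups.count("experimental"))  # roughly half of 10,000 for a 50% share
```

Including the experiment name in the hashed key gives each experiment its own, unrelated assignment of users.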

- 16. Algorithms which are positive on both CTR and RPS, Oct-Nov 2006
  - [Figure: scatter plot of algorithms by CTR % change (y-axis) versus RPS % change (x-axis), both from 0.0% to 3.5% (scale removed); points are labeled with algorithm numbers.]

- 17. Ad Serving Revenue vs. Control
  - [Figure: Smart vs. Control, ad serving revenue versus control, May 2007 - Jan 2008. Bar chart of % change for RPS, RPBS, and CTR (CPBS); bar labels 5.7%, 4.1%, and 0.7% (scale removed).]

- 18. Ad Serving Revenue vs. Control
  - [Figure: Smartmatch revenue versus control; y-axis in dollars, $0 to $30,000,000 (scale removed).]

- 19. Algorithms in Public Domain
  - Alg14 and Alg24: Jidong Wang, Hua-Jun Zeng, Zheng Chen, Hongjun Lu, Li Tao, Wei-Ying Ma. ReCoM: Reinforcement Clustering of Multi-Type Interrelated Data Objects. In Proceedings of the 26th Annual International ACM SIGIR Conference on Research and Development in Information Retrieval (SIGIR'03), pp. 274-281, Toronto, Canada, July 2003. http://team/sites/Broadmatch/Shared%20Documents/p16477-wang.pdf
  - Alg 11: Donald Metzler, Susan Dumais, Chris Meek. Similarity Measures for Short Segments of Text. Preprint, 2006. http://team/sites/Broadmatch/Shared%20Documents/MetzlerDumaisMeekECIR07-Final.doc

- 20. Conclusion
  - Greedy optimization method for maximizing revenue or CTR.
  - Used very simple features, e.g., CTR and conditional CTR, as well as more complex ones we haven't discussed.
  - Running live, at scale (7% of US traffic), with control groups.
  - Revenue and relevance are generally correlated (as noted by Jensen and other authors), but very high revenue is not correlated with relevance: an inverted-U-shaped function! Hypothesis: high-revenue advertisers may be compensating for poor CTR by boosting their prices as high as possible.
  - Conditional CTR and global CTR are effective methods for predicting ad performance, and they avoid training.
  - Feature-based prediction is most effective.